Definition of entropy

12/6/2023

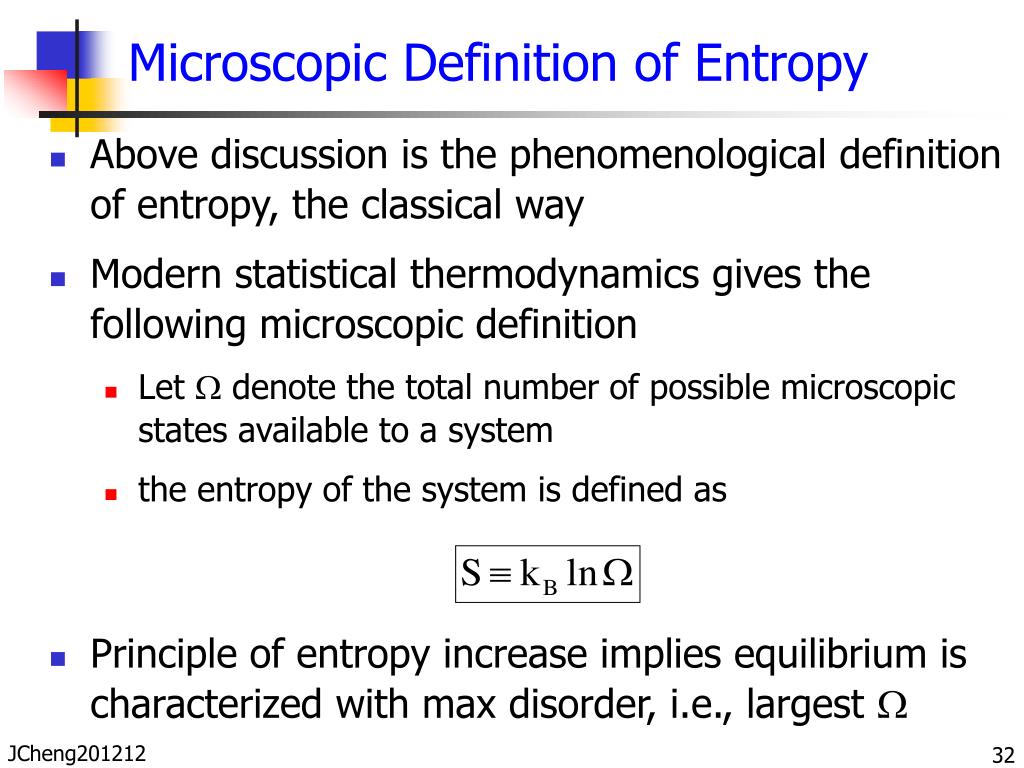

Electrochemical batteries are currently a major enabler of the energy transition. However, batteries involve redox reactions during charging and discharging, which limits their primary use to applications with relatively steady and 'slow' energy output (for example, portable electronics and electric vehicles). Generally, information entropy is the average amount of information conveyed by an event, when considering all possible outcomes. The expression for this average is called Shannon entropy or information entropy. Unfortunately, in information theory the symbol for entropy is H, and the constant k_B is absent. We have a closed system if no energy from an outside source can enter the system (strictly speaking, thermodynamics calls such a system isolated). The term and the concept are used in diverse fields, from classical thermodynamics, where it was first recognized, to the microscopic description of nature in statistical physics.
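To make the "average amount of information" idea concrete, here is a minimal Python sketch of Shannon entropy, H = -Σ p·log(p), summed over all possible outcomes. The function name `shannon_entropy` and the coin examples are illustrative assumptions, not something taken from a particular library.

```python
import math

def shannon_entropy(probabilities, base=2):
    """Average information over all outcomes (bits when base is 2)."""
    if not math.isclose(sum(probabilities), 1.0, abs_tol=1e-9):
        raise ValueError("probabilities must sum to 1")
    # Outcomes with p == 0 are skipped: they contribute nothing to the sum.
    return sum(-p * math.log(p, base) for p in probabilities if p > 0)

# Hypothetical examples: a fair coin, a biased coin, and a certain outcome.
fair_coin = shannon_entropy([0.5, 0.5])
biased_coin = shannon_entropy([0.9, 0.1])
certain = shannon_entropy([1.0])
```

A fair coin gives the largest value for two outcomes (one bit), a biased coin gives less, and an outcome that is certain carries no information at all.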

Entropy usually refers to the idea that everything in the universe eventually moves from order to disorder, and entropy is the measure of that change. Entropy is a scientific concept, as well as a measurable physical property, that is most commonly associated with a state of disorder, randomness, or uncertainty. In science, entropy is used to quantify the amount of disorder in a closed system. The idea of entropy comes from a principle of thermodynamics dealing with energy. There is broad demand to accelerate the transition to more efficient, less polluting, and renewable energy sources such as solar and wind, but these sources often cannot produce energy all the time. Entropy is also a measure of the amount of energy that is unavailable to do work in a closed system. Recovering chemically stored energy requires breaking and forming chemical bonds (typically via combustion) and, while this can be effective, it can also produce pollution that contributes to climate change. Although all forms of energy can be used to do work, it is not possible to use the entire available energy for work. The more disordered a system and the higher its entropy, the less of the system's energy is available to do work.

The challenge of storing energy is not new, and for generations we have relied on chemical approaches (that is, energy is stored in the arrangement of atoms in molecules, as in carbon-based fuels) to this end. Entropy is a measure of the disorder of a system. We face a colossal energy storage problem, with analysts predicting a more than 120-fold increase in global energy storage needs by 2040 [1]. Qualitatively, entropy is simply a measure of how much the energy of atoms and molecules becomes more spread out in a process, and it can be defined in terms of the statistical probabilities of a system or in terms of other thermodynamic quantities.
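The two routes to a definition mentioned above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the statistical route uses the Gibbs form S = -k_B Σ p·ln(p) over microstate probabilities, and the thermodynamic route uses the Clausius relation ΔS = Q/T for heat exchanged reversibly at constant temperature. The function names and the numbers (1000 microstates, 1000 J at 300 K) are made up for the example.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant in J/K (exact in the 2019 SI)

def gibbs_entropy(probabilities):
    """Statistical definition: S = -k_B * sum(p * ln p) over microstates."""
    return -K_B * sum(p * math.log(p) for p in probabilities if p > 0)

def clausius_entropy_change(heat_joules, temperature_kelvin):
    """Thermodynamic definition for reversible heat at constant T: dS = Q / T."""
    return heat_joules / temperature_kelvin

# For W equally likely microstates, the Gibbs form reduces to S = k_B * ln(W).
W = 1000  # hypothetical number of microstates
s_uniform = gibbs_entropy([1.0 / W] * W)
s_boltzmann = K_B * math.log(W)

# Hypothetical numbers: 1000 J of heat absorbed reversibly at 300 K.
delta_s = clausius_entropy_change(1000.0, 300.0)  # in J/K
```

Dropping the factor `K_B` and switching to a base-2 logarithm turns the statistical form into the information entropy H, which is why the constant is absent in information theory.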
